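Contents

- I. Background

- II. Symptoms

- III. Analysis

- 1. Is the one-command startup configuration at fault?

- 2. Anomaly: a worker node shuts down or drops offline

- Summary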

I. Background

The environment consists of three virtual machines: node1, node2, and node3.

The HDFS cluster is started with a single command, start-dfs.sh.

II. Symptoms

1. Check which processes started on node1

    [root@node1 ~]# start-dfs.sh
    [root@node1 ~]# jps
    4145 Jps
    2102 NameNode
    2247 DataNode
    [root@node1 ~]#

2. Check which processes started on node2

    [root@node2 logs]# jps
    3828 Jps
    1800 DataNode
    [root@node2 logs]#

3. Check which processes started on node3

    [root@node3 ~]# jps
    3428 Jps
    [root@node3 ~]#

Problem found: the DataNode worker on node3 did not start.
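The per-node check above can be scripted. The following is a minimal sketch, assuming passwordless SSH from the current machine to node1, node2, and node3 and that jps is on the PATH of a non-interactive remote shell (it may not be if the JDK is only configured in a login profile). The helper names has_daemon and check_nodes are hypothetical.

```shell
#!/usr/bin/env bash
# has_daemon NAME: read jps output on stdin and succeed only if a JVM
# whose main class is exactly NAME is listed (hypothetical helper).
has_daemon() {
  awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# check_nodes: run jps on each node over SSH and report DataNode status.
# Assumption: passwordless SSH to node1..node3 for the current user.
check_nodes() {
  local node
  for node in node1 node2 node3; do
    if ssh "$node" jps | has_daemon DataNode; then
      echo "$node: DataNode running"
    else
      echo "$node: DataNode NOT running"
    fi
  done
}
```

Calling check_nodes prints one status line per node, which makes a missing DataNode easier to spot than logging in to each machine in turn.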

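III. Analysis

1. Is the one-command startup configuration at fault?

The startup configuration is unlikely to be the cause: the cluster started successfully the first time, which indicates that the configuration itself is valid.

2. Anomaly: a worker node shuts down or drops offline

2.1. Investigate by checking the logs on node3

Log path: /export/server/hadoop-3.3.0/logs/<name of the log file to inspect>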

    [root@node3 ~]# cd /export/server/hadoop-3.3.0/logs
    [root@node3 logs]# cat hadoop-root-datanode-node3.itcast.cn.log
    2023-06-06 07:24:31,896 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: RECEIVED SIGNAL 1: SIGHUP
    2023-06-06 07:24:31,907 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: RECEIVED SIGNAL 15: SIGTERM
    2023-06-06 07:24:31,914 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: SHUTDOWN_MSG:
    /************************************************************
    SHUTDOWN_MSG: Shutting down DataNode at node3.itcast.cn/192.168.88.153
    ************************************************************/
    [root@node3 logs]#

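Note: viewing the log with cat prints everything from the very first startup up to the present, which is a very large amount of output.

For that reason, view the node3 log as follows instead:

- ① Open the log file in vim

- ② In command mode, type / followed by the timestamp of interest to jump to the relevant log entries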
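As a non-interactive alternative to searching inside vim, the same lookup can be sketched with grep. This assumes each log entry starts with a timestamp such as 2023-06-06 07:24 and carries its level (INFO, WARN, ERROR) as a space-separated word, as in the DataNode log shown in this article. The function names log_around and errors_only are hypothetical.

```shell
#!/usr/bin/env bash
# log_around FILE PATTERN [CONTEXT]: print lines matching PATTERN together
# with CONTEXT lines before and after (default 5), prefixed by line number.
log_around() {
  local file=$1 pattern=$2 context=${3:-5}
  grep -n -C "$context" -e "$pattern" -- "$file"
}

# errors_only FILE: list only the ERROR-level entries, with line numbers.
errors_only() {
  grep -n -e ' ERROR ' -- "$1"
}
```

For example, log_around hadoop-root-datanode-node3.itcast.cn.log '2023-06-06 07:24' 3 would show the entries logged in that minute plus three lines of context on each side, without paging through the whole file.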

    [root@node3 logs]# ll
    total 7020
    -rw-r--r-- 1 root root 6950235 Jun 6 11:31 hadoop-root-datanode-node3.itcast.cn.log
    -rw-r--r-- 1 root root 692 Jun 6 11:26 hadoop-root-datanode-node3.itcast.cn.out
    -rw-r--r-- 1 root root 692 Jun 5 21:33 hadoop-root-datanode-node3.itcast.cn.out.1
    -rw-r--r-- 1 root root 692 Jun 5 18:15 hadoop-root-datanode-node3.itcast.cn.out.2
    -rw-r--r-- 1 root root 692 Jun 5 07:15 hadoop-root-datanode-node3.itcast.cn.out.3
    -rw-r--r-- 1 root root 692 Jun 4 22:44 hadoop-root-datanode-node3.itcast.cn.out.4
    -rw-r--r-- 1 root root 205640 Jun 5 19:33 hadoop-root-nodemanager-node3.itcast.cn.log
    -rw-r--r-- 1 root root 2201 Jun 5 18:19 hadoop-root-nodemanager-node3.itcast.cn.out
    -rw-r--r-- 1 root root 2201 Jun 5 07:23 hadoop-root-nodemanager-node3.itcast.cn.out.1
    -rw-r--r-- 1 root root 0 Jun 4 22:44 SecurityAuth-root.audit
    drwxr-xr-x 2 root root 6 Jun 5 07:40 userlogs
    [root@node3 logs]# vim hadoop-root-datanode-node3.itcast.cn.log
    2023-06-05 21:33:37,872 INFO org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.FsDatasetImpl: Adding block pool BP-389489230-192.168.88.151-1685888665811
    2023-06-05 21:33:37,873 INFO org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.FsDatasetImpl: Scanning block pool BP-389489230-192.168.88.151-1685888665811 on volume /export/data/hadoop-3.3.0/dfs/data...
    2023-06-05 21:33:37,913 INFO org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.FsDatasetImpl: Time taken to scan block pool BP-389489230-192.168.88.151-1685888665811 on /export/data/hadoop-3.3.0/dfs/data: 41ms
    2023-06-05 21:33:37,913 INFO org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.FsDatasetImpl: Total time to scan all replicas for block pool BP-389489230-192.168.88.151-1685888665811: 42ms
    2023-06-05 21:33:37,915 INFO org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.FsDatasetImpl: Adding replicas to map for block pool BP-389489230-192.168.88.151-1685888665811 on volume /export/data/hadoop-3.3.0/dfs/data...
    2023-06-05 21:33:37,915 INFO org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.BlockPoolSlice: Replica Cache file: /export/data/hadoop-3.3.0/dfs/data/current/BP-389489230-192.168.88.151-1685888665811/current/replicas doesn't exist
    2023-06-05 21:33:37,930 INFO org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.FsDatasetImpl: Time to add replicas to map for block pool BP-389489230-192.168.88.151-1685888665811 on volume /export/data/hadoop-3.3.0/dfs/data: 16ms
    2023-06-05 21:33:37,930 INFO org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.FsDatasetImpl: Total time to add all replicas to map for block pool BP-389489230-192.168.88.151-1685888665811: 16ms
    2023-06-05 21:33:37,931 INFO org.apache.hadoop.hdfs.server.datanode.checker.ThrottledAsyncChecker: Scheduling a check for /export/data/hadoop-3.3.0/dfs/data
    2023-06-05 21:33:37,941 INFO org.apache.hadoop.hdfs.server.datanode.checker.DatasetVolumeChecker: Scheduled health check for volume /export/data/hadoop-3.3.0/dfs/data
    2023-06-05 21:33:37,952 INFO org.apache.hadoop.hdfs.server.datanode.VolumeScanner: VolumeScanner(/export/data/hadoop-3.3.0/dfs/data, DS-151efa3b-8d41-483c-91d3-24c93d2871a2): no suitable block pools found to scan. Waiting 1732279321 ms.
    2023-06-05 21:33:37,955 WARN org.apache.hadoop.hdfs.server.datanode.DirectoryScanner: dfs.datanode.directoryscan.throttle.limit.ms.per.sec set to value above 1000 ms/sec. Assuming default value of -1
    2023-06-05 21:33:37,955 INFO org.apache.hadoop.hdfs.server.datanode.DirectoryScanner: Periodic Directory Tree Verification scan starting in 7915082ms with interval of 21600000ms and throttle limit of -1ms/s
    2023-06-05 21:33:37,961 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Block pool BP-389489230-192.168.88.151-1685888665811 (Datanode Uuid e7c67709-7680-4f33-81ed-50f6bbf48b46) service to node1/192.168.88.151:8020 beginning handshake with NN
    2023-06-05 21:33:37,997 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Block pool BP-389489230-192.168.88.151-1685888665811 (Datanode Uuid e7c67709-7680-4f33-81ed-50f6bbf48b46) service to node1/192.168.88.151:8020 successfully registered with NN
    2023-06-05 21:33:37,998 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: For namenode node1/192.168.88.151:8020 using BLOCKREPORT_INTERVAL of 21600000msecs CACHEREPORT_INTERVAL of 10000msecs Initial delay: 0msecs; heartBeatInterval=3000
    2023-06-05 21:33:38,138 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Successfully sent block report 0x45e611d10c32cfb7, containing 1 storage report(s), of which we sent 1. The reports had 4 total blocks and used 1 RPC(s). This took 5 msecs to generate and 83 msecs for RPC and NN processing. Got back one command: FinalizeCommand/5.
    2023-06-05 21:33:38,138 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Got finalize command for block pool BP-389489230-192.168.88.151-1685888665811
    2023-06-06 07:24:31,896 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: RECEIVED SIGNAL 1: SIGHUP
    2023-06-06 07:24:31,907 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: RECEIVED SIGNAL 15: SIGTERM
    2023-06-06 07:24:31,914 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: SHUTDOWN_MSG:
    /************************************************************
    SHUTDOWN_MSG: Shutting down DataNode at node3.itcast.cn/192.168.88.153
    ************************************************************/
    [root@node3 logs]# jps
    3458 Jps
    [root@node3 logs]# cd /export/server/hadoop-3.3.0/sbin
    [root@node3 sbin]# ll
    total 108
    -rwxr-xr-x 1 root root 2756 Jun 4 21:10 distribute-exclude.sh
    drwxr-xr-x 4 root root 36 Jun 4 21:10 FederationStateStore
    -rwxr-xr-x 1 root root 1983 Jun 4 21:10 hadoop-daemon.sh
    -rwxr-xr-x 1 root root 2522 Jun 4 21:10 hadoop-daemons.sh
    -rwxr-xr-x 1 root root 1542 Jun 4 21:10 httpfs.sh
    -rwxr-xr-x 1 root root 1500 Jun 4 21:10 kms.sh
    -rwxr-xr-x 1 root root 1841 Jun 4 21:10 mr-jobhistory-daemon.sh
    -rwxr-xr-x 1 root root 2086 Jun 4 21:10 refresh-namenodes.sh
    -rwxr-xr-x 1 root root 1779 Jun 4 21:10 start-all.cmd
    -rwxr-xr-x 1 root root 2221 Jun 4 21:10 start-all.sh
    -rwxr-xr-x 1 root root 1880 Jun 4 21:10 start-balancer.sh
    -rwxr-xr-x 1 root root 1401 Jun 4 21:10 start-dfs.cmd
    -rwxr-xr-x 1 root root 5170 Jun 4 21:10 start-dfs.sh
    -rwxr-xr-x 1 root root 1793 Jun 4 21:10 start-secure-dns.sh
    -rwxr-xr-x 1 root root 1571 Jun 4 21:10 start-yarn.cmd
    -rwxr-xr-x 1 root root 3342 Jun 4 21:10 start-yarn.sh
    -rwxr-xr-x 1 root root 1770 Jun 4 21:10 stop-all.cmd
    -rwxr-xr-x 1 root root 2166 Jun 4 21:10 stop-all.sh
    -rwxr-xr-x 1 root root 1783 Jun 4 21:10 stop-balancer.sh
    -rwxr-xr-x 1 root root 1455 Jun 4 21:10 stop-dfs.cmd
    -rwxr-xr-x 1 root root 3898 Jun 4 21:10 stop-dfs.sh
    -rwxr-xr-x 1 root root 1756 Jun 4 21:10 stop-secure-dns.sh
    -rwxr-xr-x 1 root root 1642 Jun 4 21:10 stop-yarn.cmd
    -rwxr-xr-x 1 root root 3083 Jun 4 21:10 stop-yarn.sh
    -rwxr-xr-x 1 root root 1982 Jun 4 21:10 workers.sh

        at java.io.FilterInputStream.read(FilterInputStream.java:133)
        at java.io.BufferedInputStream.fill(BufferedInputStream.java:246)
        at java.io.BufferedInputStream.read(BufferedInputStream.java:265)
        at java.io.FilterInputStream.read(FilterInputStream.java:83)
        at java.io.FilterInputStream.read(FilterInputStream.java:83)
        at org.apache.hadoop.ipc.Client$Connection$PingInputStream.read(Client.java:562)
        at java.io.DataInputStream.readInt(DataInputStream.java:387)
        at org.apache.hadoop.ipc.Client$IpcStreams.readResponse(Client.java:1881)
        at org.apache.hadoop.ipc.Client$Connection.receiveRpcResponse(Client.java:1191)
        at org.apache.hadoop.ipc.Client$Connection.run(Client.java:1087)
    2023-06-05 00:03:05,799 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: RECEIVED SIGNAL 15: SIGTERM
    2023-06-05 00:03:05,805 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: SHUTDOWN_MSG:
    /************************************************************
    SHUTDOWN_MSG: Shutting down DataNode at node3.itcast.cn/192.168.88.153
    ************************************************************/
    2023-06-05 07:15:43,274 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: STARTUP_MSG:
    /************************************************************
    STARTUP_MSG: Starting DataNode
    STARTUP_MSG: host = node3.itcast.cn/192.168.88.153
    STARTUP_MSG: args = []
    STARTUP_MSG: version = 3.3.0
    ...

- 2.1.1. Interpreting the error messages

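Error message: 2023-06-06 07:24:31,896 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: RECEIVED SIGNAL 1: SIGHUP

① org.apache.hadoop.hdfs.server.datanode.DataNode: the class that logged the message, that is, the DataNode daemon reporting a signal it received

② SIGHUP: the hangup signal (signal 1), sent to a process when its controlling terminal or session is closed

③ org.apache.hadoop: the package namespace of the Hadoop framework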

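Error message: 2023-06-06 07:24:31,907 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: RECEIVED SIGNAL 15: SIGTERM

① hdfs.server.datanode.DataNode: the DataNode class in the HDFS server package, that is, the component that logged the message

② RECEIVED SIGNAL 15: SIGTERM => signal 15 (SIGTERM) was received

③ 15: SIGTERM => signal 15 is the termination request that the kill command sends when no signal option is given; it asks the process to shut down.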
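To make the two signal numbers concrete, here is a small sketch using the shell's kill builtin, which maps signal numbers to names and sends SIGTERM when no signal is specified. The sleep process is only a stand-in for a daemon such as the DataNode; nothing here touches Hadoop.

```shell
#!/usr/bin/env bash
# Map the signal numbers from the log to their names.
kill -l 1    # prints HUP: hangup, e.g. the controlling terminal closed
kill -l 15   # prints TERM: termination request, the default for kill

# Start a stand-in background process and stop it with a plain kill,
# which sends SIGTERM because no signal option is given.
sleep 60 &
pid=$!
kill "$pid"

# A process ended by signal N is reported by the shell as status 128+N.
status=0
wait "$pid" || status=$?
echo "exit status: $status"   # 143 = 128 + 15 (SIGTERM)
```

Both signals in the DataNode log therefore come from outside the process: something closed its session (SIGHUP) and something asked it to terminate (SIGTERM), after which it logged SHUTDOWN_MSG and exited.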

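- 2.1.2. Fix:

Initial assessment: the DataNode on node3 has dropped out of the cluster, which the author attributes to a problem with file data blocks. On node3, start the DataNode again manually and then ask the NameNode to refresh its node list:

    hdfs --daemon start datanode
    hdfs dfsadmin -refreshNodes

The dfsadmin option is spelled -refreshNodes. It makes the NameNode re-read its include and exclude host files and does not by itself start a stopped DataNode process, so the daemon is started explicitly first.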

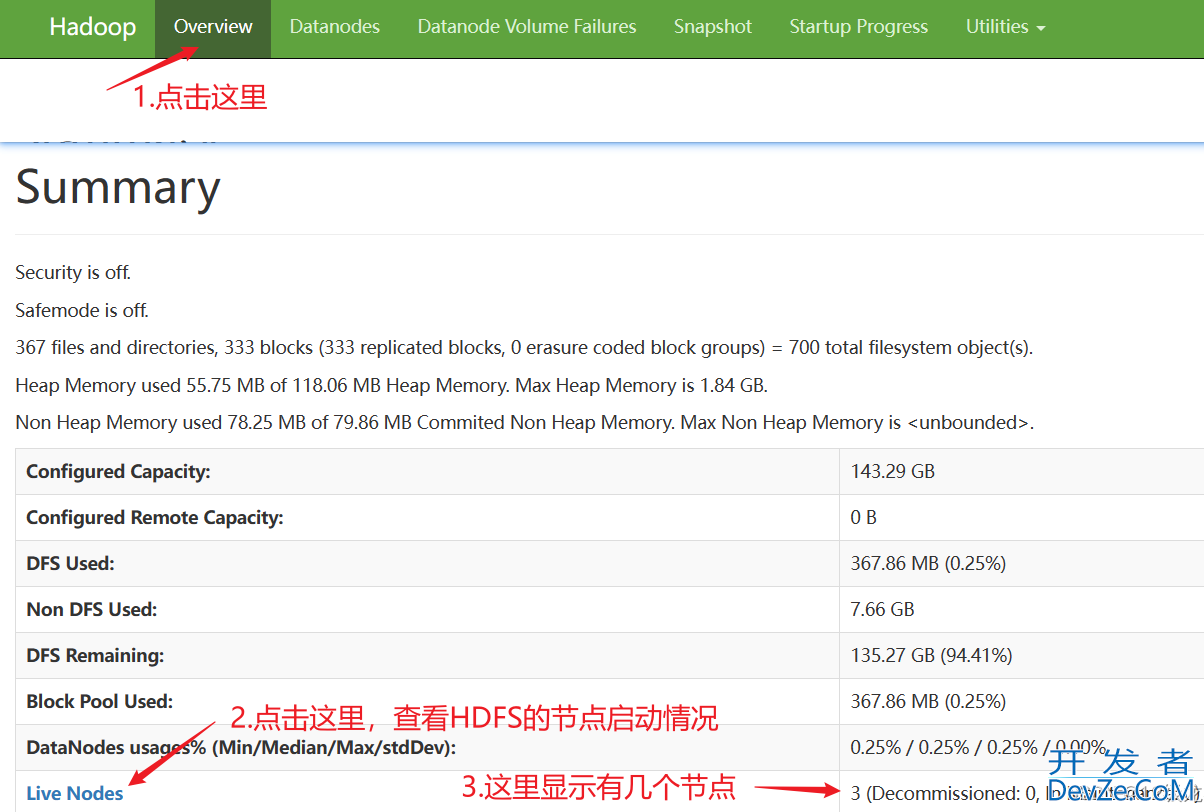

2.2. Check through the HDFS web UI in a browser

step1:

step2:

step3: Fix:

On node3, start the DataNode again manually and then ask the NameNode to refresh its node list:

    hdfs --daemon start datanode
    hdfs dfsadmin -refreshNodes
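Whether node3 has rejoined the cluster can also be confirmed from the command line rather than the web page. The sketch below parses the output of hdfs dfsadmin -report and assumes it contains a line of the form "Live datanodes (N):", which is how Hadoop 3.x prints the summary as far as I know; verify the format against your own version. The function name live_datanodes is hypothetical.

```shell
#!/usr/bin/env bash
# live_datanodes: read `hdfs dfsadmin -report` output on stdin and print
# the N from the first "Live datanodes (N):" line, or 0 if it is absent.
# Assumption: the report uses exactly that wording for the summary line.
live_datanodes() {
  awk -F'[()]' '
    /^Live datanodes \(/ { n = $2; found = 1; exit }
    END { print (found ? n : 0) }
  '
}
```

On this three-node cluster, hdfs dfsadmin -report | live_datanodes should print 3 once the DataNode on node3 has registered with the NameNode again; a smaller number means a worker is still missing.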

Summary

The above is based on personal experience and is offered as a reference.
