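When running experiments, we often test on open-source datasets. PyTorch's companion library torchvision ships with many built-in datasets, and we usually load them with the DataLoader class.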

As an example, we first obtain the FashionMNIST dataset from torchvision, which we will then load with DataLoader.

    from torch.utils.data import DataLoader
    from torchvision import datasets
    from torchvision.transforms import ToTensor
    import matplotlib.pyplot as plt

    training_data = datasets.FashionMNIST(
        root="data",
        train=True,
        download=True,
        transform=ToTensor()
    )

    test_data = datasets.FashionMNIST(
        root="data",
        train=False,
        download=True,
        transform=ToTensor()
    )

Next, we define DataLoader objects to load these two datasets:

    train_dataloader = DataLoader(training_data, batch_size=64, shuffle=True)
    test_dataloader = DataLoader(test_data, batch_size=64, shuffle=True)

So what type is this train_dataloader, exactly?

    print(type(train_dataloader))  # <class 'torch.utils.data.dataloader.DataLoader'>

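We can first convert it into an iterator. With the default num_workers=0, the resulting iterator is a single-process one: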

    print(type(iter(train_dataloader)))  # <class 'torch.utils.data.dataloader._SingleProcessDataLoaderIter'>
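To make the difference between the loader object and its iterator more concrete, here is a minimal pure-Python sketch. `TinyLoader` is a hypothetical stand-in, not PyTorch's actual implementation; it only mimics the fact that the loader is an iterable whose `__iter__` hands back a separate iterator object.

```python
class TinyLoader:
    """Hypothetical stand-in for DataLoader: an iterable, not an iterator."""

    def __init__(self, data, batch_size):
        self.data = data
        self.batch_size = batch_size

    def __iter__(self):
        # Each call builds a brand-new iterator (here, a generator)
        # that yields consecutive batches.
        for start in range(0, len(self.data), self.batch_size):
            yield self.data[start:start + self.batch_size]


loader = TinyLoader(list(range(10)), batch_size=4)
iterator = iter(loader)

print(hasattr(loader, "__next__"))    # False: next(loader) would fail
print(hasattr(iterator, "__next__"))  # True: next(iterator) works
first_batch = next(iterator)
print(first_batch)                    # [0, 1, 2, 3]
```

The loader only knows how to produce iterators; the iterator is the object that actually remembers the current position.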

Then we use next(iter(train_dataloader)) to fetch data from the iterator, as shown below:

    # Fetch one batch of images and labels
    train_features, train_labels = next(iter(train_dataloader))
    print(f"Feature batch shape: {train_features.size()}")
    print(f"Labels batch shape: {train_labels.size()}")

    # Drop the channel dimension of the first image so it can be plotted
    img = train_features[0].squeeze()
    label = train_labels[0]
    plt.imshow(img, cmap="gray")
    plt.show()
    print(f"Label: {label}")

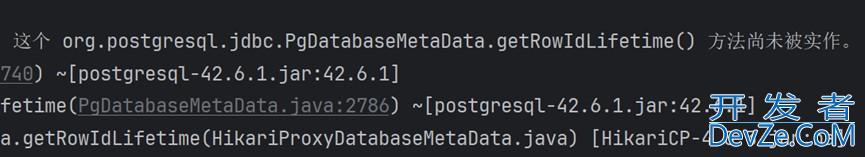

可以看到我们成功获取了数据集中第一张图片的信息,控制台打印:

Feature batch shape: torch.Size([64, 1, 28, 28]) Labels batch shape: torch.Size([64]) Label: 2

图片可视化显示如下:
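One detail worth noting about the `next(iter(...))` idiom: every call to `iter()` on an iterable creates a fresh iterator, so repeating the whole expression starts over instead of advancing. The sketch below uses a plain list as a stand-in for the data loader (an assumption made so the example runs without PyTorch). With a real DataLoader and shuffle=True, each fresh iterator would typically draw a new random order rather than return the same batch.

```python
batches = [["a", "b"], ["c", "d"], ["e", "f"]]  # stand-in for a data loader

# A new iterator is created on every call, so we always get the first batch.
first = next(iter(batches))
again = next(iter(batches))
print(first, again)   # ['a', 'b'] ['a', 'b']

# Keeping one iterator around lets successive next() calls advance.
it = iter(batches)
b1 = next(it)
b2 = next(it)
print(b1, b2)         # ['a', 'b'] ['c', 'd']
```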

Some readers may wonder, though: much of the time we never explicitly convert the DataLoader into an iterator. Most of the time we write code like this:

    for train_features, train_labels in train_dataloader:
        print(train_features.shape)  # torch.Size([64, 1, 28, 28])
        print(train_features[0].shape)  # torch.Size([1, 28, 28])
        print(train_features[0].squeeze().shape)  # torch.Size([28, 28])

        img = train_features[0].squeeze()
        label = train_labels[0]
        plt.imshow(img, cmap="gray")
        plt.show()
        print(f"Label: {label}")

As you can see, this code also iterates over the training data without problems. The console output for the first three batches is:

    torch.Size([64, 1, 28, 28])
    torch.Size([1, 28, 28])
    torch.Size([28, 28])
    Label: 7
    torch.Size([64, 1, 28, 28])
    torch.Size([1, 28, 28])
    torch.Size([28, 28])
    Label: 4
    torch.Size([64, 1, 28, 28])
    torch.Size([1, 28, 28])
    torch.Size([28, 28])
    Label: 1
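The loop as written visits every batch in the dataset. To look at only the first few batches, one option is `itertools.islice` from the standard library. The sketch below uses a hypothetical `fake_loader` list of (features, labels) pairs in place of train_dataloader so that it runs without PyTorch; the same pattern should apply to any iterable.

```python
from itertools import islice

# Hypothetical stand-in for train_dataloader: five (features, labels) batches.
fake_loader = [(f"features_{i}", f"labels_{i}") for i in range(5)]

seen = []
# islice stops the iteration after three items and never requests a fourth.
for features, labels in islice(fake_loader, 3):
    seen.append((features, labels))

print(len(seen))  # 3
```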

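So why didn't we explicitly convert the DataLoader into an iterator here? This is a mechanism of Python's for loop: whenever we iterate over an object with the for ... in ... construct, the Python interpreter quietly calls iter() on it and creates the iterator for us. In other words,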

    for train_features, train_labels in train_dataloader:
        ...  # loop body

is effectively equivalent to

    for train_features, train_labels in iter(train_dataloader):
        ...  # loop body

Going one step further, this is effectively equivalent to

    train_iterator = iter(train_dataloader)
    try:
        while True:
            train_features, train_labels = next(train_iterator)
            # loop body goes here
    except StopIteration:
        pass
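The equivalence can be checked with a small runnable sketch. `manual_for` is a hypothetical helper written for illustration, and a list of pairs stands in for the data loader. Unlike the version in the text, it wraps only the `next()` call in `try`, so that a StopIteration raised inside the loop body would not be silently swallowed.

```python
def manual_for(iterable, body):
    """Run body(item) for each item, spelling out what a for loop does."""
    iterator = iter(iterable)       # step 1: obtain an iterator
    while True:
        try:
            item = next(iterator)   # step 2: fetch the next item
        except StopIteration:
            break                   # step 3: iterator exhausted, leave the loop
        body(item)                  # the loop body runs outside the try block


collected_manual = []
manual_for([(1, "a"), (2, "b")], collected_manual.append)

collected_for = []
for pair in [(1, "a"), (2, "b")]:
    collected_for.append(pair)

print(collected_manual == collected_for)  # True
```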

More generally, when we iterate directly over a list in Python:

    for x in [1, 2, 3, 4]:
        ...  # loop body

the Python interpreter has in fact already obtained an iterator from the list for us, implicitly:

    list_iterator = iter([1, 2, 3, 4])
    try:
        while True:
            x = next(list_iterator)
            # loop body goes here
    except StopIteration:
        pass
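A practical consequence of this mechanism is that an iterable can be looped over many times, since each for loop asks for a fresh iterator, while a single iterator is used up after one pass. This is the same reason a data loader can be reused across training epochs. A minimal sketch with a plain list:

```python
numbers = [1, 2, 3, 4]

# An iterable can be looped over repeatedly: each for loop calls iter() again.
epoch_sums = []
for epoch in range(2):
    total = 0
    for x in numbers:
        total += x
    epoch_sums.append(total)
print(epoch_sums)                # [10, 10]

# An iterator is single-use: once exhausted, a second pass sees nothing.
it = iter(numbers)
first_pass = [x for x in it]
second_pass = [x for x in it]
print(first_pass, second_pass)   # [1, 2, 3, 4] []
```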
